Claude for Lawyers: The Real Question Isn't Which AI to Buy

Harvey, Legora, Anthropic, and the AI-native firms betting against all of them. Here's what the money says about where legal is headed.

Katon Luaces

Welcome to Attorney Intelligence, the weekly newsletter from PointOne where we break down the forces reshaping legal from the inside out.

Anthropic launched a legal plugin for Claude in February 2026. Claude can now draft a contract memo, triage a stack of NDAs, and knock out the performance review you've been avoiding since Monday. It costs $20 a month. The leading legal AI platforms charge twelve times that for a program that runs on the same underlying intelligence.

Claude’s legal plugin doesn’t yet match what enterprise platforms provide in governance stacks, DMS integrations, and credible audit trails. But Claude’s low price point changes the economics for many solo practitioners and SMEs.

Comparing what Claude offers lawyers with enterprise products like Harvey and Legora raises a harder question than the one most buyers are asking: Where does the value actually sit? The answer is not in pricing but in the trust and governance architecture. This week’s newsletter is an attempt to think through that question carefully, with the aim of reframing what buyers demand from every new AI tool.

In this week's Attorney Intelligence:

What Claude can do for lawyers

Legal AI pricing at a glance

Where Claude excels and where it falls short

The pitch for purpose-built AI

Next-generation SaaS

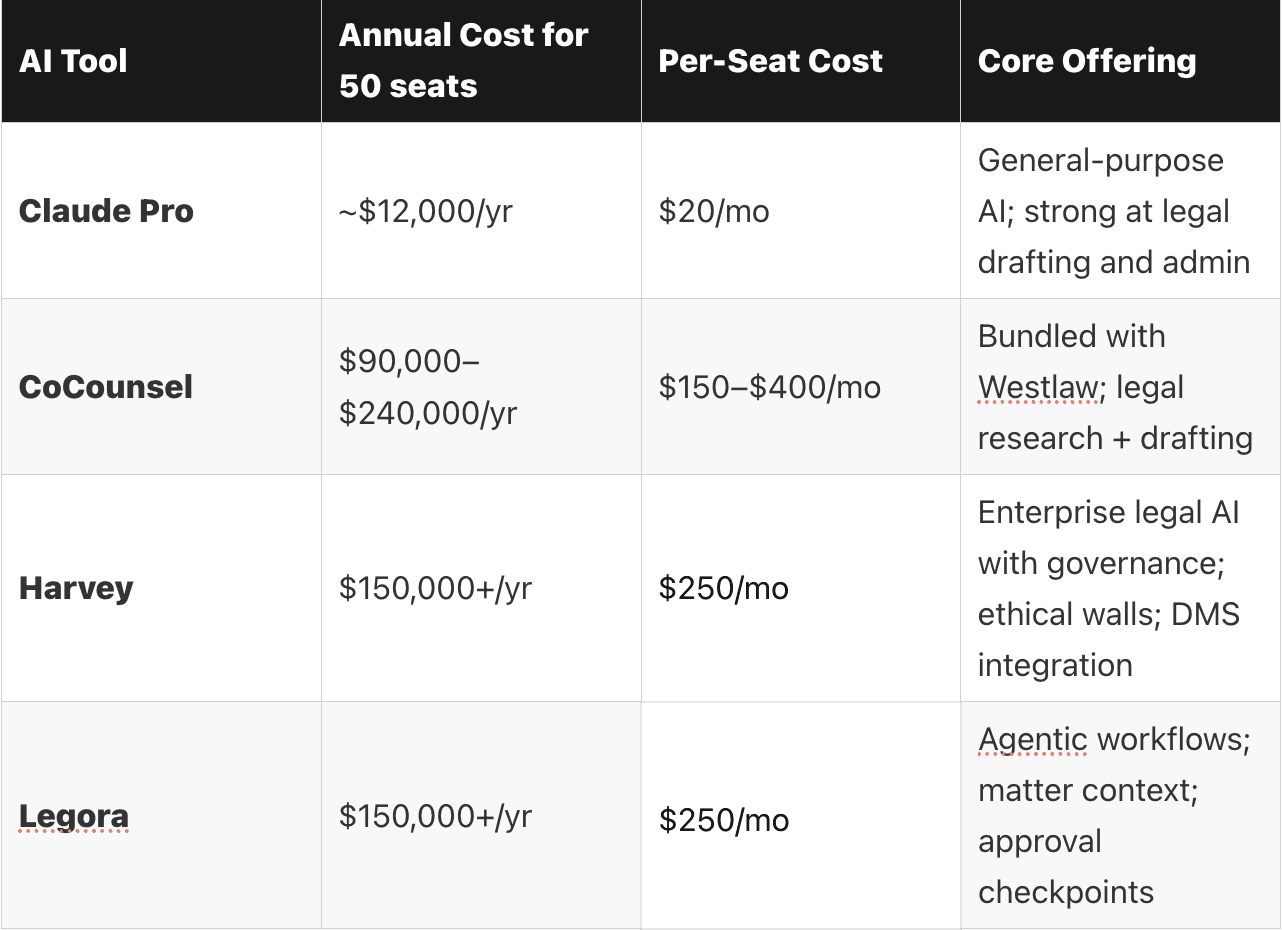

Legal AI Pricing Compared: Claude vs. Harvey vs. CoCounsel vs. Legora

Built on top of the same foundational models that power general-purpose AI like Claude are purpose-built products for the legal industry.

At the top of the legal AI market sit Harvey and CoCounsel. These companies offer deep law firm integrations and the full governance stack in exchange for premium pricing. Legora is close behind, pushing hard on agentic workflows. Spellbook occupies the mid-market.

And then there’s Claude at $20/month, doing a surprising amount of the same work.

Legal AI Pricing at a Glance

Estimates based on publicly available pricing and practitioner reports. Actual costs vary by firm size and contract terms.

Despite sitting on (mostly) the same models, these are genuinely different products with different capabilities. Recently, however, they have become less differentiated in the context of legal work. Practitioners are discussing this openly now. In legal tech forums, comparing Harvey, Legora and others is a recurring topic of conversation, driven not by vendor marketing but by individual attorneys who’ve used these tools side by side.

With the gap in output quality narrowing much more rapidly than the price differential, it’s worth taking a hard look at Claude’s capabilities and shortcomings.

Claude for Legal Work: Where It Delivers and Where It Falls Short

Claude is genuinely good at legal work. Contract review, memo drafting, summarizing case law, generating complaint language from prior filings — lawyers report getting at least 90% of the way there before they need to touch anything.

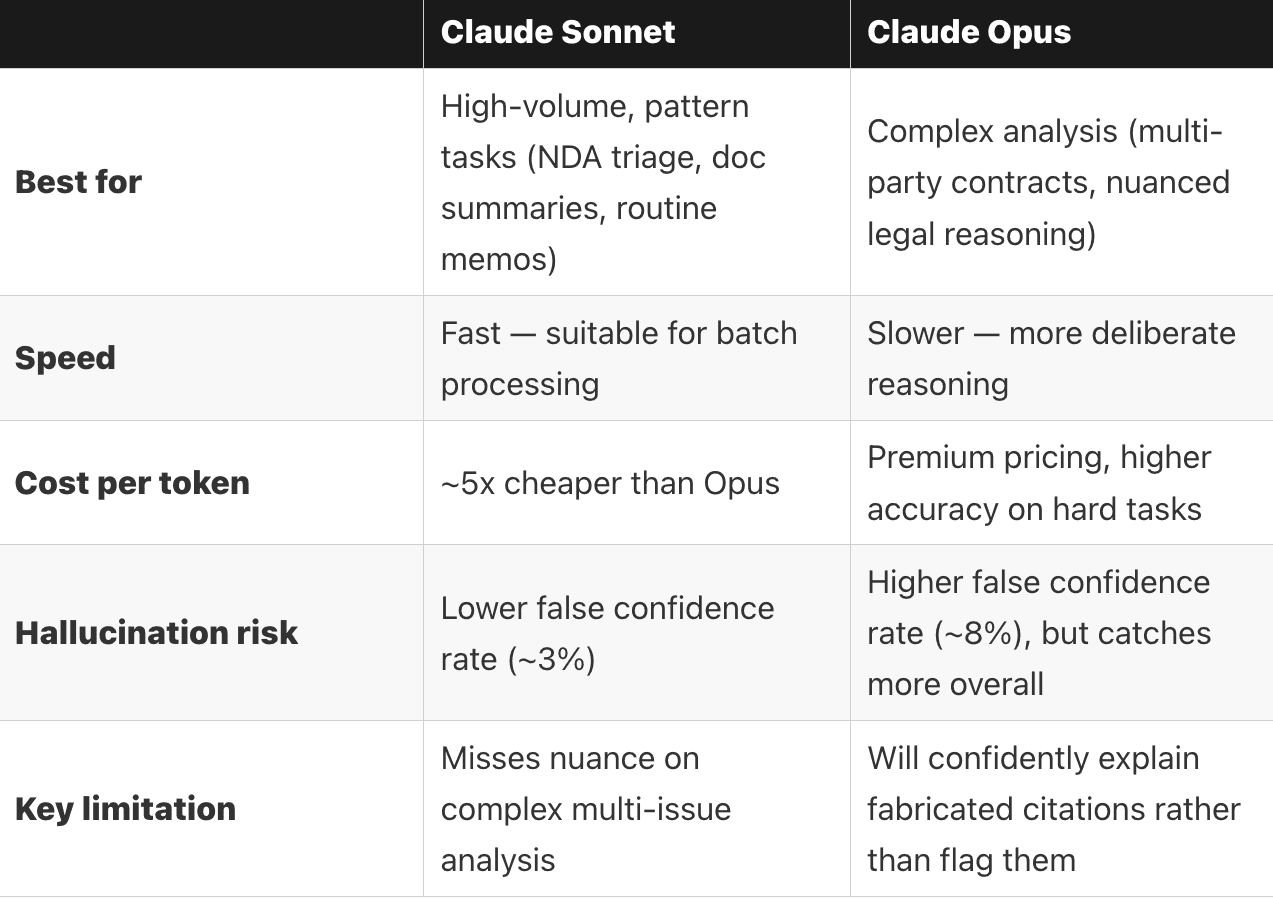

Two of Claude’s models are well-suited to legal tasks: Sonnet and Opus. The former is particularly adept at managing high-volume work, such as reviewing NDAs and service agreements. The latter can handle a higher level of complexity, excelling at multi-party contracts and outlines for litigation teams. Based on token use, those models come in at slightly different price points — but well below the purpose-built legal AI tools.

Claude Model Comparison for Legal Tasks.

Both of Claude’s models are good at discrete tasks that rely chiefly on intelligence. But on tasks that require judgment, such as interpreting a sophisticated legal argument with nuance, they fall short. That means Claude can amplify a lawyer’s ability but not replace them. Feed a frontier model a fabricated legal citation and it may not flag the error; instead, it may explain why that fake statute is a logical extension of existing law.

Bottom line, lawyers still need to know what good looks like.

Purpose-Built Legal AI: What You Get Beyond the Model

Agentic Workflows and the Governance Stack

Legal AI companies Harvey and Legora have built their products on top of models similar to Claude. How do they justify their price tags?

Legora makes the sharpest version of the counter-argument. In their 2026: Year of Agents piece, the pitch isn’t “we have a better model.” It’s that a mature legal AI platform enables end-to-end automation by providing both the firm-specific context and the structure needed to replicate existing workflows within the law firm.

The same deployment infrastructure that embeds this institutional knowledge in the AI system is also meant to deliver a higher degree of security. Structured approval checkpoints, complete audit trails, and tight DMS integrations keep humans in the loop and help prevent data leaks. You don't get any of that by opening Claude and typing a prompt.

The Trust Problem and Who's Actually Solving It

Shadow AI is already inside law firms. Partners and associates are pasting client documents into Claude or ChatGPT on personal accounts without telling IT. It's the reality that 273 Ventures and others have been documenting, and it's exactly the kind of behavior that implicates the confidentiality obligations of ABA Model Rule 1.6. Purpose-built platforms are implicitly selling a solution to this: enterprise deployment, centralized billing, audit logging, private networking. The pitch is “we make the AI safe to use at scale.”

But do you need a full platform to solve the trust problem? Truth Systems, a California-based startup advised by Stanford’s Dr. Megan Ma, is taking a different approach. Their product Charter sits between the lawyer and whatever AI tool the firm uses — Claude, ChatGPT, or something else — and converts firm policies and client engagement letters into real-time guardrails that block non-compliant prompts before they’re ever sent. No full platform required. Just the trust layer. They raised $4 million in seed funding in late 2025, and the bet is clear: You don’t need to replace the model or the wrapper. You need to own the trust layer between them.

The question is whether that wrapper is a durable product or just better packaging around the same underlying models.

So Where Does the VC Money Think This Is Going?

Let’s follow the money for a second. Harvey just raised $200 million at an $11 billion valuation. Legora raised $550 million at $5.5 billion. Between them, that's $16.5 billion in combined valuation for companies whose core product is built on someone else's model.

That's $16.5 billion riding on the assumption that you need a separate product on top of the foundational model. And the question every managing partner is going to ask sooner or later: Why should I pay for another piece of software when I can just use Claude? Anthropic clearly isn't trying to compete with these companies — they're encouraging adoption through MCP integrations, plugins, and an open ecosystem. But that openness is exactly what creates tension. If Claude can access your data, run your workflows, and connect to your tools directly, the value proposition of every layer sitting on top of it has to get sharper.

Harvey and Legora are increasingly highlighting the features that make their products at once customizable and highly secure, rather than output quality. This likely signals that these startups are pursuing the model recently outlined in Sequoia’s piece Services: The New Software, in which the VC giant bets that the next wave of billion-dollar companies won’t just sell tools but will design and implement workflows uniquely tailored to each customer. In legal, that might be the path that actually earns the valuation.

Legal Bytes

Courts Are Done Being Patient with AI-Generated Legal Filings. Sanctions accelerating: MyPillow lawyers fined, Nebraska attorney referred for discipline, Georgia Supreme Court incident in March.

OpenAI Sued for Practicing Law Without a License. Nippon Life filed in federal court in Illinois, alleging that ChatGPT helped a policyholder draft litigation documents in an active case and that responding cost Nippon $300K in legal fees. $10M in punitive damages sought. Also, read Stanford Law's analysis framing it as product liability.

Florida Attorney General Launches Investigation into OpenAI, ChatGPT. The tech giants are facing increasing scrutiny for their products’ impacts on children.

Want to learn how PointOne can help you transform your firm with AI?

Book a demo to see how PointOne supports compliance automatically — helping both timekeepers and billing teams adhere to firm-wide standards.

Thanks for reading and I'll see you next week,

Katon